In today’s data driven world, organizations generate massive amounts of information every second from customer transactions and social media interactions to IoT sensor readings and application logs. But raw data alone is worthless. Someone needs to collect, transform, and organize this information into usable formats. That’s where data engineers come in, serving as the architects and builders of the data infrastructure that powers modern business intelligence and machine learning initiatives.

Table of Contents

ToggleKey Takeaways

- Data engineers design and build the infrastructure and pipelines that collect, store, and process large volumes of data for analysis

- The role bridges IT and analytics, requiring expertise in programming, database management, cloud platforms, and distributed computing systems

- Data engineering jobs are in high demand with competitive salaries averaging $120,000-$160,000 annually in the United States

- Big data technologies like Apache Spark, Hadoop, Kafka, and cloud data warehouses are essential tools in a data engineer’s toolkit

- Career growth is strong with opportunities to advance into senior engineering roles, data architecture, or management positions

Understanding Data Engineering: The Foundation of Modern Analytics

Data engineering is the practice of designing, building, and maintaining the systems and architecture that enable organizations to collect, store, process, and analyze data at scale. Think of data engineers as the construction workers and plumbers of the data world they build the pipelines and infrastructure that allow data to flow smoothly from its source to the analysts, scientists, and business users who need it.

Unlike data scientists who focus on extracting insights from data, or data analysts who interpret data to answer business questions, data engineers concentrate on the “how” of data management. They ensure that data is:

- Accessible: Available when and where it’s needed

- Reliable: Accurate, consistent, and trustworthy

- Scalable: Capable of handling growing data volumes

- Secure: Protected from unauthorized access or breaches

- Performant: Delivered quickly enough to support real time or near real time use cases

The rise of big data has made data engineering one of the most critical roles in technology organizations. As companies increasingly rely on data driven decision making and artificial intelligence, the demand for skilled data engineers continues to surge.

Core Responsibilities of a Data Engineer

1. Designing and Building Data Pipelines

The primary responsibility of a data engineer is creating data pipelines automated workflows that move data from source systems to destination systems while performing necessary transformations along the way. These pipelines might:

- Extract data from databases, APIs, file systems, or streaming sources

- Transform data by cleaning, validating, aggregating, or enriching it

- Load data into data warehouses, data lakes, or analytical databases

This ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) process forms the backbone of modern data infrastructure.

2. Developing and Maintaining Data Architecture

Data engineers design the overall data architecture for their organizations, making critical decisions about:

- Which databases and storage systems to use

- How to structure and organize data

- Whether to use batch processing, stream processing, or both

- How to partition and index data for optimal performance

- What data governance and security measures to implement

3. Optimizing Data Systems for Performance

As data volumes grow, performance optimization becomes crucial. Data engineers continuously monitor and improve:

- Query performance and execution times

- Storage costs and efficiency

- Pipeline reliability and error handling

- System scalability and resource utilization

4. Ensuring Data Quality and Reliability

Data quality directly impacts business decisions and analytical outcomes. Data engineers implement:

- Validation rules and data quality checks

- Error handling and alerting mechanisms

- Data lineage tracking to understand data origins

- Testing frameworks for data pipelines

- Monitoring and observability tools

5. Collaborating with Cross-Functional Teams

Data engineers work closely with:

- Data scientists to provide clean, structured data for machine learning models

- Data analysts to build reporting infrastructure and dashboards

- Software engineers to integrate data systems with applications

- Business stakeholders to understand data requirements and priorities

- DevOps teams to implement best practices for cloud environments

Essential Skills for Data Engineering Jobs

Technical Skills

| Skill Category | Key Technologies & Concepts |

|---|---|

| Programming Languages | Python, Java, Scala, SQL, Bash |

| Big Data Frameworks | Apache Spark, Hadoop, Kafka, Flink |

| Databases | PostgreSQL, MySQL, MongoDB, Cassandra, Redis |

| Cloud Platforms | AWS (S3, Redshift, EMR), Azure (Synapse, Data Factory), GCP (BigQuery, Dataflow) |

| Data Warehousing | Snowflake, Redshift, BigQuery, Databricks |

| Orchestration Tools | Apache Airflow, Luigi, Prefect, Dagster |

| Containerization | Docker, Kubernetes |

| Version Control | Git, GitHub, GitLab |

Soft Skills

Beyond technical expertise, successful data engineers possess:

- Problem solving abilities: Breaking down complex data challenges into manageable solutions

- Communication skills: Explaining technical concepts to non-technical stakeholders

- Attention to detail: Ensuring data accuracy and pipeline reliability

- Continuous learning: Staying current with rapidly evolving technologies

- Collaboration: Working effectively across diverse teams

The Data Engineering Workflow: From Source to Insight

Stage 1: Data Ingestion

Data engineers connect to various data sources including:

- Transactional databases (MySQL, PostgreSQL)

- Application APIs

- Log files and event streams

- Third-party data providers

- IoT devices and sensors

- Web scraping and crawling

They build robust ingestion mechanisms that handle different data formats (JSON, CSV, Parquet, Avro) and delivery patterns (batch, real time streaming, micro batch).

Stage 2: Data Storage

Once collected, data needs appropriate storage. Data engineers choose between:

- Data warehouses: Structured, optimized for analytics (Snowflake, Redshift)

- Data lakes: Raw data storage in native formats (S3, Azure Data Lake)

- Data lakehouses: Combining warehouse and lake capabilities (Databricks, Delta Lake)

- Operational databases: For application needs (MongoDB, Cassandra)

Stage 3: Data Processing and Transformation

Raw data rarely arrives in analysis ready format. Data engineers implement transformations to:

- Clean and deduplicate records

- Standardize formats and values

- Join data from multiple sources

- Aggregate and summarize information

- Calculate derived metrics

- Apply business logic and rules

Stage 4: Data Serving

Finally, processed data must be accessible to consumers through:

- SQL query interfaces

- REST APIs

- Business intelligence tools (Tableau, Power BI, Looker)

- Machine learning platforms

- Real time dashboards and applications

Big Data Technologies: The Data Engineer’s Toolkit

Apache Spark

Apache Spark offers:

- In memory computing for faster processing

- Support for batch and streaming workloads

- Libraries for SQL, machine learning, and graph processing

- APIs in Python, Scala, Java, and R

Apache Kafka

Kafka powers real time data streaming. Data engineers use it to:

- Build real time data pipelines

- Stream processing applications

- Event driven architectures

- Log aggregation at scale

Cloud Data Platforms

Cloud platforms have revolutionized data engineering by providing:

- Elastic scalability: Resources that grow with demand

- Managed services: Reduced operational overhead

- Pay-as-you-go pricing: Cost optimization

- Global availability: Data centers worldwide

Modern Data Stack

The “modern data stack” refers to cloud-native, best of breed tools:

- Ingestion: Fivetran, Airbyte, Stitch

- Warehousing: Snowflake, BigQuery, Redshift

- Transformation: dbt (data build tool)

- Orchestration: Airflow, Prefect

- Visualization: Looker, Tableau, Metabase

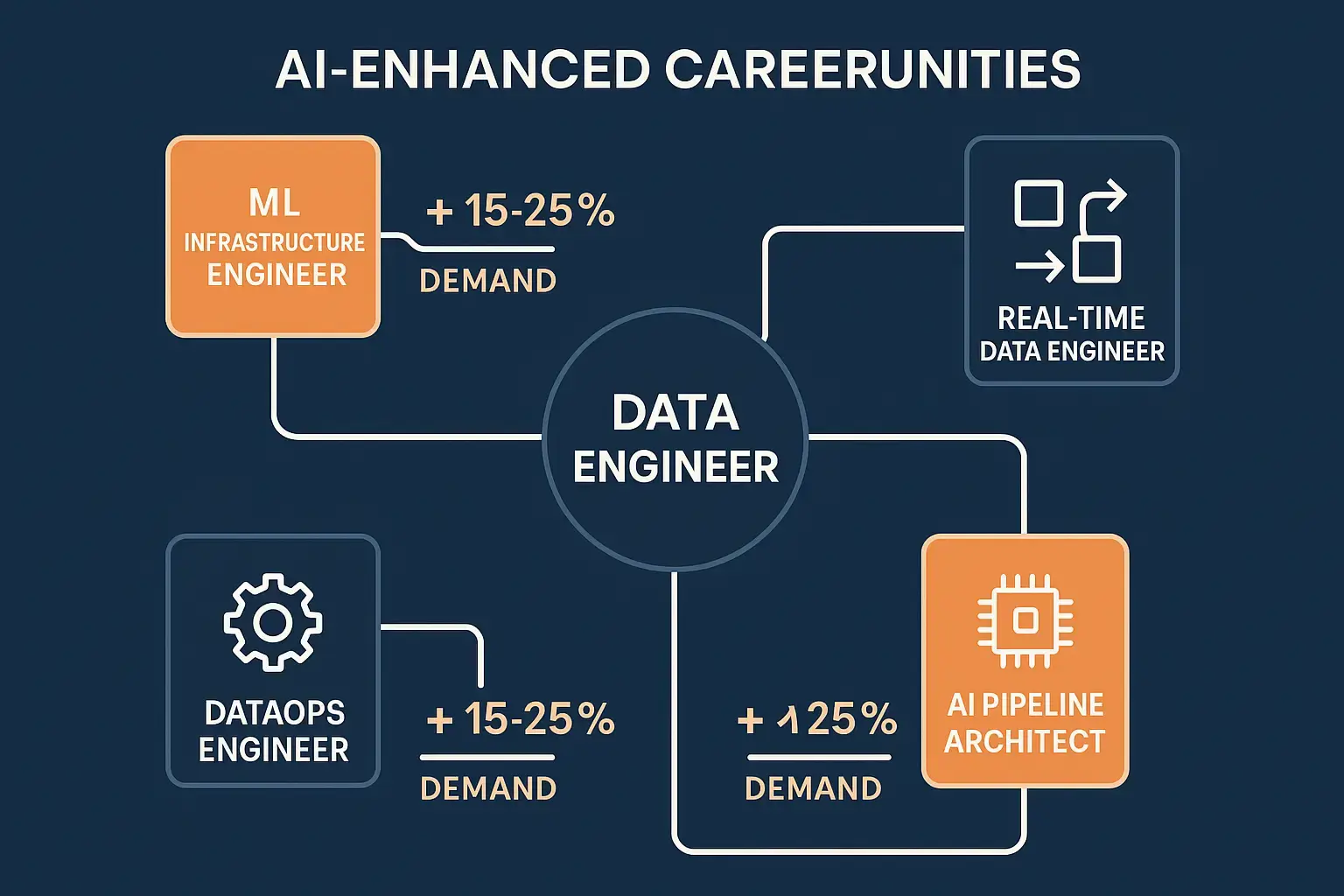

Career Path and Data Engineering Jobs

Entry Level Positions

- Junior Data Engineer: Building pipelines under supervision

- ETL Developer: Focusing on data integration

- Database Developer: Managing database systems

- Analytics Engineer: Bridging analytics and engineering

Typical requirements:

- Bachelor’s degree in Computer Science, Engineering, or related field

- Proficiency in SQL and at least one programming language

- Understanding of database concepts

- Familiarity with cloud platforms

Mid Level Roles

- Data Engineer: Full ownership of pipeline development

- Big Data Engineer: Specializing in large-scale distributed systems

- Cloud Data Engineer: Expertise in cloud-native architectures

- Platform Engineer: Building internal data platforms

Typical salary range: $100,000 – $150,000 (USD)

Senior and Leadership Positions

- Senior Data Engineer: Technical leadership and architecture

- Lead Data Engineer: Managing teams and projects

- Data Architect: Designing enterprise data strategies

- Director of Data Engineering: Organizational leadership

Typical salary range: $150,000 – $250,000+ (USD)

Industry Demand

- Data engineering jobs are among the fastest-growing in technology

- LinkedIn listed it as one of the top emerging jobs

- Demand grew 50%+ year over year from 2020-2024

- Every industry needs data engineers from finance and healthcare to retail and entertainment

- Remote work opportunities are abundant

Data Engineering vs. Related Roles

Data Engineer vs. Data Scientist

| Aspect | Data Engineer | Data Scientist |

|---|---|---|

| Primary Focus | Building data infrastructure | Extracting insights and building models |

| Key Skills | Software engineering, databases, pipelines | Statistics, machine learning, domain expertise |

| Tools | Spark, Kafka, Airflow, SQL | Python, R, scikit-learn, TensorFlow |

| Deliverables | Data pipelines, warehouses, APIs | Models, analyses, predictions |

Data Engineer vs. Data Analyst

Data analysts consume the data that engineers provide. While analysts focus on querying data and creating reports, engineers build the systems that make this possible.

Data Engineer vs. Software Engineer

- Software engineers build applications for end users

- Data engineers build data systems for internal consumers

- Overlap: Both use similar programming languages and DevOps best practices

Real World Applications and Use Cases

E commerce and Retail

- Real time inventory management: Tracking stock across locations

- Personalization engines: Powering product recommendations

- Customer 360 views: Unifying data from web, mobile, and in-store

- Fraud detection: Identifying suspicious transactions

Financial Services

- Risk modeling: Aggregating market and transaction data

- Regulatory reporting: Ensuring compliance with data requirements

- Trading systems: Processing market data in milliseconds

- Customer analytics: Understanding behavior and preferences

Healthcare

- Electronic health records: Integrating patient data from multiple systems

- Clinical research: Processing genomic and trial data

- Population health: Analyzing trends across patient populations

- Predictive analytics: Identifying at-risk patients

Media and Entertainment

- Content recommendations: Powering Netflix, Spotify, and YouTube suggestions

- Audience measurement: Tracking viewership and engagement

- Ad targeting: Delivering personalized advertising

- Content optimization: A/B testing and performance analysis

Challenges in Data Engineering

Data Quality Issues

- Missing or incomplete data

- Inconsistent formats and standards

- Duplicate records

- Outdated information

- Data drift over time

Scalability Concerns

- Increasing data volumes (terabytes to petabytes)

- Higher query concurrency

- More complex transformations

- Global distribution requirements

Technology Complexity

- Hundreds of competing tools and platforms

- Frequent version updates and breaking changes

- Integration challenges between systems

- Steep learning curves for new technologies

Organizational Challenges

- Unclear requirements from stakeholders

- Limited resources and budget constraints

- Legacy systems and technical debt

- Compliance and security requirements

- Cross-team coordination difficulties

Best Practices for Effective Data Engineering

1. Design for Scalability from Day One

- Use distributed architectures

- Partition data appropriately

- Implement caching strategies

- Plan for horizontal scaling

2. Implement Comprehensive Monitoring

- Track pipeline execution times

- Monitor data quality metrics

- Set up alerts for failures

- Log important events and errors

3. Prioritize Data Quality

- Validate data at ingestion

- Implement schema enforcement

- Document data definitions

- Test transformations thoroughly

4. Embrace Automation

- Use orchestration tools for scheduling

- Implement CI/CD for data pipelines

- Automate testing and validation

- Script common maintenance tasks

5. Document Everything

- Maintain data dictionaries

- Document pipeline logic

- Create architecture diagrams

- Write runbooks for common issues

6. Follow Security Best Practices

- Implement access controls

- Encrypt data at rest and in transit

- Mask or tokenize sensitive fields

- Audit data access regularly

The Future of Data Engineering

DataOps and Automation

- Automated testing and deployment

- Version control for data and code

- Continuous integration and delivery

- Collaboration between teams

Real-Time and Streaming

- Stream-first architectures

- Event-driven systems

- Real-time analytics and dashboards

- Instant data activation

AI and Machine Learning Integration

- Feature stores for ML models

- MLOps pipelines

- Model serving infrastructure

- Generative AI applications requiring robust data foundations

Serverless and Managed Services

- Serverless data processing (AWS Glue, Azure Functions)

- Fully managed warehouses

- Auto-scaling infrastructure

- Pay-per-query pricing models

Data Mesh and Decentralization

- Domain-oriented data ownership

- Self-service data infrastructure

- Federated governance

- Data as a product mindset

Emphasis on Data Governance

- GDPR, CCPA, and privacy compliance

- Data lineage and cataloging

- Access control and auditing

- Metadata management

How to Become a Data Engineer

Educational Pathways

Formal education:

- Computer Science or Engineering degree

- Data Science or Analytics programs

- Online courses and bootcamps

- Self-directed learning

Key subjects to study:

- Database systems and SQL

- Programming (Python, Java, Scala)

- Data structures and algorithms

- Distributed systems

- Cloud computing

Building Practical Experience

Hands on projects:

- Build an ETL pipeline processing public datasets

- Create a real time dashboard with streaming data

- Design a data warehouse schema

- Deploy a data pipeline to the cloud

- Contribute to open-source data tools

Portfolio development:

- Showcase projects on GitHub

- Write technical blog posts

- Create video tutorials

- Participate in hackathons

- Present at meetups

Certifications

Industry certifications can boost credibility:

- AWS Certified Data Analytics

- Google Cloud Professional Data Engineer

- Microsoft Certified: Azure Data Engineer Associate

- Databricks Certified Data Engineer

- Cloudera Certified Professional: Data Engineer

Networking and Community

Connect with the data engineering community:

- Attend conferences (DataEngConf, Spark Summit)

- Join online communities (Reddit, Discord, Slack)

- Participate in local meetups

- Follow industry leaders on social media

- Read technical blogs and publications

For more insights on technology careers and best practices, explore additional resources on the BitTech Solutions blog.

Conclusion: Building the Data Infrastructure of Tomorrow

The role of a data engineer has evolved from a niche technical position to a critical driver of business value in the modern digital economy. As organizations continue to recognize that data is their most valuable asset, the professionals who design, build, and maintain the infrastructure to harness that data become increasingly indispensable.

Data engineers serve as the essential bridge between raw information and actionable insights, enabling data scientists to build predictive models, analysts to generate reports, and business leaders to make informed decisions. Their work with big data technologies, cloud platforms, and distributed systems powers everything from personalized recommendations to fraud detection to medical breakthroughs.

For those considering data engineering jobs, the field offers:

- Strong career prospects with high demand across industries

- Competitive compensation reflecting the role’s strategic importance

- Intellectual challenges working with cutting edge technologies

- Tangible impact seeing your infrastructure enable business outcomes

- Continuous learning as the technology landscape evolves